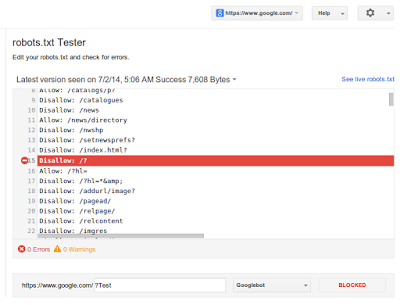

The robots.txt file controls which URLs on a site crawlers may fetch, which in turn affects what gets indexed. It is typically the first file bots request when they crawl a site. Google Webmaster Tools (WMT) used to have a Blocked URLs section that showed the robots.txt file. Now, if Google isn't crawling a page, the robots.txt tester (formerly Blocked URLs), located under the Crawl section of Google Webmaster Tools, lets you test whether an issue in your file is blocking Google.
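As a rough illustration of how a robots.txt rule decides whether a URL may be crawled, the sketch below uses Python's standard `urllib.robotparser` module. The sample rules, the `example.com` URLs, and the `is_allowed` helper are assumptions made up for this example. Python's parser does not necessarily resolve conflicting rules the same way Googlebot does, so treat it as a quick local check rather than a substitute for the tester.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for this illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/private/page.html"))  # False
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/index.html"))  # True
```

To check a live site instead, `RobotFileParser` also offers `set_url()` and `read()`, which download the file before `can_fetch()` is called.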

According to an update on the Google Webmaster Central blog (http://googlewebmastercentral.blogspot.in/2014/07/testing-robotstxt-files-made-easier.html):

The robots.txt testing tool in Webmaster Tools offers the following:

- It shows the current robots.txt file, and you can test new URLs to see whether they are allowed or disallowed for crawling.

- To guide you through complicated directives, it highlights the specific one that led to the final decision.

- You can make changes in the file and test those too.

- You can review older versions of your robots.txt file and see when access issues blocked crawling. For example, if Googlebot sees a 500 server error for the robots.txt file, Google will generally pause further crawling of the website.

- Since some errors or warnings may be shown for your existing sites, Google recommends double-checking their robots.txt files.

- You can also combine the tester with other parts of Webmaster Tools. For example, you might use the updated Fetch as Google tool in WMT to render and submit important pages on your website.

- If any blocked URLs are reported, you can use the robots.txt tester to find the directive that's blocking them and then fix it.

- A common problem comes from old robots.txt files that block CSS, JavaScript, or mobile content. Fixing that is often trivial once you've seen it.
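The tester's habit of highlighting the directive that decided the outcome can be approximated offline. The following is a minimal sketch, not Google's implementation: it assumes the precedence Google documents for conflicting rules (the longest matching path wins, and Allow wins a tie), handles only plain path prefixes (no `*` or `$` wildcards, no query strings), and matches user-agent names exactly. The names `Rule`, `parse_rules`, `deciding_rule`, and the sample file are hypothetical.

```python
from typing import List, NamedTuple, Optional
from urllib.parse import urlsplit


class Rule(NamedTuple):
    line_no: int  # 1-based line number in the robots.txt text
    allow: bool   # True for Allow, False for Disallow
    path: str     # path prefix the rule applies to


def parse_rules(robots_txt: str, user_agent: str = "*") -> List[Rule]:
    """Collect Allow/Disallow rules from groups naming `user_agent` exactly."""
    rules: List[Rule] = []
    in_group = False        # are we inside a group for our user agent?
    reading_agents = False  # are we still in a run of User-agent lines?
    for line_no, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not reading_agents:
                in_group = False  # a new group begins here
            reading_agents = True
            if value.lower() == user_agent.lower():
                in_group = True
        elif field in ("allow", "disallow"):
            reading_agents = False
            if in_group and value:  # an empty Disallow blocks nothing
                rules.append(Rule(line_no, field == "allow", value))
    return rules


def deciding_rule(rules: List[Rule], url: str) -> Optional[Rule]:
    """Return the rule that decides `url`, or None if no rule matches."""
    path = urlsplit(url).path or "/"
    matches = [rule for rule in rules if path.startswith(rule.path)]
    if not matches:
        return None  # nothing matches, so crawling is allowed by default
    # Longest path wins; on equal length, Allow (True) beats Disallow.
    return max(matches, key=lambda rule: (len(rule.path), rule.allow))


# Hypothetical legacy file that blocks stylesheets and scripts.
SAMPLE = """\
User-agent: *
Disallow: /assets/
Allow: /assets/public/
Disallow: /m/
"""

rules = parse_rules(SAMPLE)
for url in ("https://example.com/assets/css/site.css",
            "https://example.com/assets/public/app.js",
            "https://example.com/index.html"):
    print(url, "->", deciding_rule(rules, url))
```

Running it on a list of your CSS and JavaScript URLs is a quick way to spot the kind of legacy `Disallow` line described above, with the reported line number pointing at the directive to change.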